They Knew

In a Los Angeles courtroom this winter, a jury is weighing a question that would have seemed unthinkable a decade ago: Did social media companies design products that harmed children -- and did they know it?

A young woman alleges that platforms she used as a minor fueled anxiety, body-image spirals and compulsive use. Executives insist their products are complex, that mental health is multi-factorial, that correlation is not causation. Lawyers debate liability. Parents watch from the gallery.

But the trial is not only about what social media did to one user. It is about what the companies knew.

Internal Facebook research -- revealed in whistleblower disclosures in 2021 -- found that 32% of teenage girls surveyed said that when they felt bad about their bodies, Instagram made them feel worse. A 2019 slide warned: “We make body-image issues worse for one in three teenage girls.”

Among teens who reported suicidal thoughts, 13% of British users and 6% of American users traced those thoughts to Instagram.

These were not outside studies. They were internal.

The cache of documents known as “The Facebook Papers” included 19 separate internal research reports examining youth mental health. The findings were not simplistic. They documented social comparison loops, the amplification of appearance-focused content and the vulnerability of teens already struggling with low self-esteem.

Researchers identified specific content categories -- fashion and beauty posts, influencer culture, celebrity images emphasizing bodies -- that correlated with increased negative comparison. They observed that for teen girls, roughly one-third of viewed content fell into these categories.

They noted that if users sought out eating disorder-related material, algorithms would serve them more of it over time. And they tested interventions.

“Project Daisy,” an initiative to hide public like counts, reduced teens’ fixation on likes and produced measurable improvements in well-being indicators. But it also reduced engagement and ad-related revenue by about 1% on Instagram. The feature was eventually launched in a limited, opt-in form -- and abandoned on Facebook.

The internal tension was clear: Mitigation reduced metrics.

They knew that engagement-based ranking rewarded emotionally charged content. They knew that appearance comparison was worse for teen girls and non-binary teens. They knew that algorithmic design choices could dampen or amplify harm.

And yet, as whistleblower Frances Haugen testified, conflicts between safety and growth were “consistently resolved in favor of growth.” The companies argued publicly that the relationship between social media and mental health was complex.

That is true. The internal studies themselves acknowledge benefits: connection, identity development, community. But complexity does not erase knowledge. When internal research shows that a significant percentage of teens report worsened body image, increased anxiety and heightened suicidal ideation -- and when executives choose to preserve engagement features that exacerbate those outcomes -- that is not ignorance. That is prioritization.

The courtroom trial may hinge on proximate cause and legal thresholds. But the broader moral case has already been written in internal slides and A/B test results. We failed our kids in the age of social media. We allowed platforms to scale to billions of users before demanding transparency. We allowed engagement metrics to define success.

We accepted parental controls and PR campaigns as substitutes for structural reform. We told ourselves that the science was unclear while the companies’ own researchers were documenting harm.

That ship has sailed. Now we are embarking on a new era -- the era of generative AI.

If social media optimized for attention, AI optimizes for cognition. It can tutor, persuade, simulate friendship, generate images of impossible perfection, and tailor content to psychological vulnerabilities with unprecedented precision.

Who is the Facebook or Instagram of this era?

Which AI platforms are being deployed into children’s bedrooms, classrooms and social lives without full transparency about internal research? Which companies are already measuring how certain prompts, filters or recommendation engines affect adolescent self-image, loneliness or compulsive use?

Which executives are debating whether safer defaults would reduce engagement and revenue? Are we vigilant enough?

Are regulators demanding access to internal data before harm becomes systemic? Are lawmakers prepared to scrutinize training data, recommender systems and monetization models before they become too entrenched to reform? Or will we wait for the whistleblower of the AI era -- the internal memo that says: “We make anxiety worse for one in four teen users” -- before we act?

Silicon Valley has demonstrated a pattern: Innovate rapidly, scale globally, study harms internally, mitigate selectively, defend publicly.

The technology changes. The incentives remain.

The question before us is not whether AI can benefit children. It can. The question is whether we will allow the same misalignment between profit and protection to define this new era.

Are we vigilant enough? Are governments vigilant enough?

Or are our children going to be defeated by Silicon Valley’s greed once again -- this time by systems even more powerful than the last?

They knew.

This time, will we?

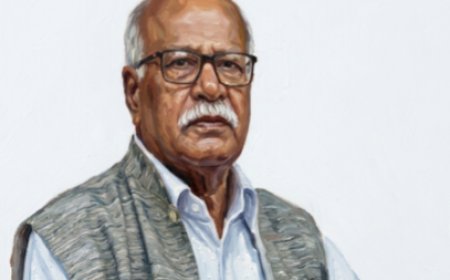

Omar Shehab is a theoretical quantum computer scientist at the IBM T. J. Watson Research Center, New York. His work has been supported by several agencies in the United States, including the Department of Defense, the Department of Energy, and NASA. He also regularly invests in AI, deep tech, hard tech, and national security.
